Introduction: Why Trust Is the Foundation of Enterprise AI

Artificial intelligence is transforming how businesses operate, serve customers, and compete in the market. Generative AI tools are writing emails, summarizing support cases, drafting contracts, and delivering insights in seconds. But alongside this tremendous promise comes a serious question that every enterprise must answer before deploying AI at scale: Can we trust it with our data?

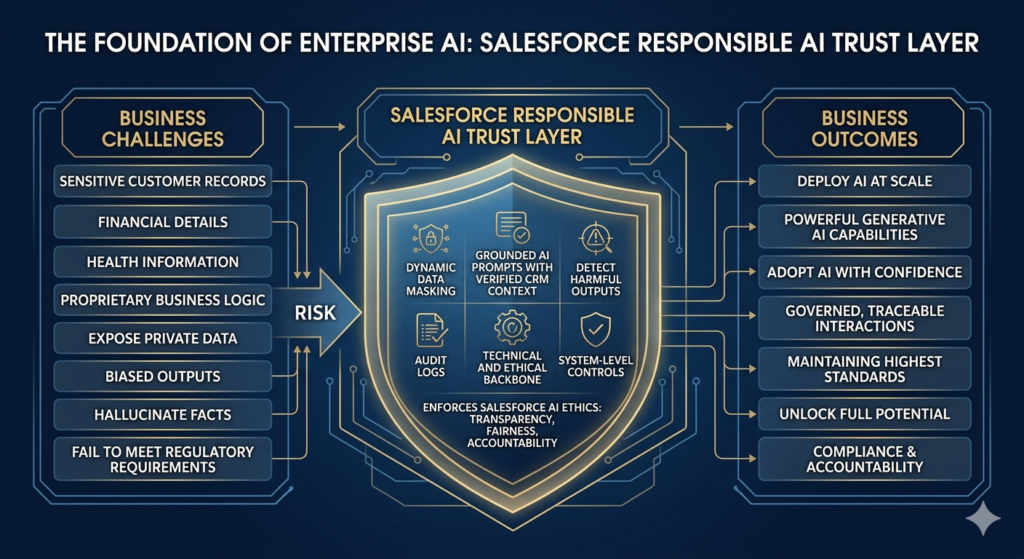

For most organizations, the answer has historically been complicated. Sensitive customer records, financial details, health information, and proprietary business logic cannot simply be handed over to a large language model (LLM) without protections in place. Without strong governance, AI systems can expose private data, produce biased outputs, hallucinate facts, or fail to meet regulatory requirements. These risks have slowed enterprise AI adoption and created friction between innovation teams and compliance departments.

This is precisely why Salesforce built the Salesforce Responsible AI Trust Layer — a purpose-built security and governance architecture that sits between your Salesforce data and the AI models powering generative features. The Trust Layer protects customer data while enabling powerful generative AI capabilities across Einstein, Agentforce, and the broader Salesforce platform.

At its core, the Salesforce Responsible AI Trust Layer is about making generative AI enterprise-ready without compromising privacy, compliance, or accountability. It is the technical and ethical backbone that puts Salesforce’s AI ethics into practice, turning principles like transparency, fairness, and accountability into real system-level controls.

The Salesforce Trust Layer is not simply a compliance checkbox. It is a living architecture that dynamically masks sensitive data, grounds AI prompts with verified CRM context, detects harmful outputs, and logs every interaction for audit purposes. It enables your teams to adopt AI with confidence, knowing that every AI interaction is governed, traceable, and aligned with your organization’s values and regulatory obligations.

In this guide, we will break down exactly how the Salesforce Trust Layer works, why it matters for responsible AI adoption, and how your organization can leverage it to unlock the full potential of AI while maintaining the highest standards of data integrity and ethics.

What Is the Salesforce Trust Layer?

The Salesforce Trust Layer is a secure, multi-layered architecture embedded directly within the Einstein 1 Platform. It governs every interaction between Salesforce’s generative AI features and the large language models that power them, whether those are Salesforce’s own models or external providers like OpenAI, Google, Anthropic, or others available through the Einstein AI model gateway.

In practical terms, the Trust Layer is the intermediary that ensures your data never travels unsecured to an LLM. Every prompt that flows through Salesforce AI passes through the Trust Layer, where it is inspected, enriched with verified context, stripped of sensitive identifiers, and governed according to policies your organization defines.

The Technical Role of the Trust Layer

The Trust Layer performs several critical functions on every AI request:

- Secure data retrieval and grounding: It pulls relevant context from your CRM records, Data Cloud, and other authorized sources to build accurate, grounded prompts

- Dynamic data masking: It identifies and masks personally identifiable information (PII), financial data, health records, and other sensitive fields before any content reaches an external LLM

- Zero data retention enforcement: It ensures that prompts, responses, and any data involved in AI interactions are not stored by external model providers or used for model training

- Toxicity and safety filtering: It scans AI outputs for harmful, biased, or inappropriate content before responses are delivered to users

- Audit logging: It maintains complete records of every AI interaction, including the prompt, the response, the data sources used, and the user involved

How the Trust Layer Connects to Responsible AI

The Trust Layer is the operational expression of Salesforce’s broader commitment to responsible AI. Salesforce’s Responsible AI principles (accuracy, safety, honesty, transparency, empowerment, and sustainability) are not just aspirational statements. They are embedded into the platform architecture through the Trust Layer, which enforces these principles at every point of AI interaction.

Salesforce’s official Responsible AI documentation explains the company’s commitment to building AI that is accountable and human-centered. The Trust Layer is the technical mechanism that makes that commitment real.

Why Responsible AI Matters in Salesforce

Before diving deeper into the Trust Layer’s components, it is worth understanding why AI ethics matters so much in a Salesforce enterprise context. The stakes for getting AI wrong in a business setting are far higher than in a consumer application.

Protecting Customer and Business Data

Salesforce holds some of the most sensitive data in an enterprise ecosystem — customer contact information, deal values, service histories, health records in healthcare deployments, and financial data in financial services implementations. Any AI feature that processes this data creates potential exposure if it is not properly secured. The Trust Layer ensures this data is governed at every step of the AI workflow.

Reducing Bias and Harmful Outputs

AI models trained on broad internet data can reflect and amplify existing biases. In a business context, this can mean discriminatory hiring recommendations, biased credit decisions, or unfair customer treatment. Responsible AI governance, enforced through the Trust Layer, includes toxicity detection and bias monitoring that help identify and mitigate these risks before they affect real customers or employees.

Improving Transparency and Explainability

One of the most significant barriers to enterprise AI adoption is the perception that AI is a black box. Employees and customers want to understand how AI decisions are made. The Trust Layer’s audit trail capabilities and grounding mechanisms make AI interactions more transparent and explainable, which is fundamental to Salesforce’s approach to AI ethics.

Supporting Regulatory Compliance

Regulations like GDPR, CCPA, HIPAA, and emerging AI-specific regulations require organizations to demonstrate accountability over automated decision-making. The Trust Layer helps organizations meet these requirements by enforcing data minimization, consent controls, and complete audit logging.

Building Confidence Among Employees and Customers

Perhaps most importantly, responsible AI governance builds trust. When employees know that AI is operating within clear ethical and security guardrails, they adopt it more readily. When customers know their data is protected, they are more willing to engage with AI-powered services. The Salesforce Responsible AI Trust Layer is ultimately a trust-building technology.

Core Components of the Salesforce Responsible AI Trust Layer

The Salesforce Responsible AI Trust Layer is composed of six primary functional components, each addressing a specific aspect of AI safety, privacy, and governance. Together, they create a comprehensive security perimeter around every generative AI interaction in the Salesforce platform.

1. Secure Data Retrieval and Grounding

The Trust Layer begins its work before a prompt ever reaches an LLM. When a user triggers an AI feature — such as asking Einstein to summarize a service case — the Trust Layer retrieves relevant, authorized data from Salesforce CRM records, Data Cloud, and other connected data sources. This data is used to ground the prompt, meaning it provides the factual context the AI needs to generate an accurate, relevant response.

Crucially, this retrieval process respects Salesforce’s existing access controls and permissions. Users can only ground prompts with data they are authorized to access. The Trust Layer enforces these permissions automatically, preventing unauthorized data exposure through AI interactions.
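As a rough illustration of permission-aware grounding, the Python sketch below filters candidate records against the set of record IDs a user can read before any context is built. The `Record` type, the `readable_ids` set, and both function names are hypothetical and chosen for illustration; they are not Salesforce APIs, and the real platform derives access from profiles, roles, and sharing rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: str
    object_type: str
    text: str


def retrieve_grounding(candidates, readable_ids, limit=3):
    """Keep only records the requesting user may read (hypothetical check)."""
    allowed = [r for r in candidates if r.record_id in readable_ids]
    return allowed[:limit]


def build_context(records):
    """Join the permitted records into a context block for the prompt."""
    return "\n".join(f"[{r.object_type}] {r.text}" for r in records)


candidates = [
    Record("500A", "Case", "Customer reports login failures since Monday."),
    Record("001B", "Account", "Premier support tier, renewal due in Q3."),
    Record("006C", "Opportunity", "Confidential expansion deal."),
]

# This user can read the case and the account, but not the opportunity.
context = build_context(
    retrieve_grounding(candidates, readable_ids={"500A", "001B"})
)
```

The point of the sketch is the ordering: the permission filter runs before context assembly, so a record the user cannot see never becomes part of the prompt.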

2. Dynamic Data Masking

Before the grounded prompt is sent to an LLM, the Trust Layer applies dynamic data masking. This process identifies sensitive data fields — including names, email addresses, Social Security numbers, financial figures, health information, and other PII — and replaces them with anonymized tokens or placeholders.

The LLM receives a functionally equivalent prompt for generating a useful response, but it never sees the actual sensitive values. When the response comes back, the Trust Layer reverses the masking process, restoring the correct values in context so the user receives a complete, accurate response.

3. Zero Data Retention with Model Providers

One of the most important protections in the Salesforce Responsible AI Trust Layer is its zero data retention architecture. Salesforce has negotiated agreements with its AI model providers that prohibit those providers from storing prompts, responses, or any data transmitted through the Trust Layer. This means your customer data is never used to train external AI models, and it is never retained on external infrastructure beyond the immediate processing window.

This protection is particularly critical for regulated industries where data residency and processing restrictions are legally mandated.

4. Toxicity Detection

The Trust Layer does not only govern what goes into an AI model — it also governs what comes out. Toxicity detection components analyze AI-generated responses before they are delivered to users, checking for harmful content, discriminatory language, inappropriate material, and responses that violate your organization’s defined policies.

Responses that fail toxicity checks are either blocked, revised, or flagged for human review, depending on the governance policies you have configured.

5. Audit Trails and Monitoring

Every AI interaction processed through the Trust Layer generates a detailed audit record. This includes the original user prompt, the data sources used for grounding, the masked prompt sent to the LLM, the raw response received, any toxicity detection results, and the final response delivered to the user. These logs are stored securely and can be accessed by administrators for compliance reviews, governance audits, and ongoing monitoring.

6. Policy Enforcement

The Trust Layer includes a policy engine that allows organizations to define custom governance rules for AI usage. These rules can restrict which AI features are available to which user groups, define approved data sources for grounding, set sensitivity thresholds for masking, configure toxicity detection sensitivity, and establish escalation paths for flagged interactions. Policy enforcement ensures that AI operates within the boundaries your organization has defined, regardless of which specific LLM is being used.
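To make the idea of a policy engine more concrete, here is a minimal Python sketch of a deny-by-default policy check. The `Policy` structure, the group names, the feature names, and `is_allowed` are all assumptions made for illustration; Salesforce configures these rules through its own setup tooling, not through code like this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_features: frozenset
    approved_sources: frozenset
    toxicity_block_threshold: float


# Hypothetical per-group policies.
POLICIES = {
    "service_agents": Policy(
        allowed_features=frozenset({"case_summary", "reply_draft"}),
        approved_sources=frozenset({"Case", "Knowledge"}),
        toxicity_block_threshold=0.8,
    ),
    "sales_reps": Policy(
        allowed_features=frozenset({"email_draft"}),
        approved_sources=frozenset({"Opportunity", "Contact"}),
        toxicity_block_threshold=0.8,
    ),
}


def is_allowed(group, feature, sources, policies=POLICIES):
    """Allow a request only if the feature and every data source are approved."""
    policy = policies.get(group)
    if policy is None:
        return False  # unknown groups are denied by default
    return (
        feature in policy.allowed_features
        and set(sources) <= policy.approved_sources
    )
```

For example, `is_allowed("service_agents", "case_summary", ["Case"])` is permitted, while the same feature requested by `"sales_reps"` is rejected because it is not in that group's feature list.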

You can explore more about how these components work within Einstein AI’s broader architecture on our Einstein AI overview page.

How Prompt Grounding Works

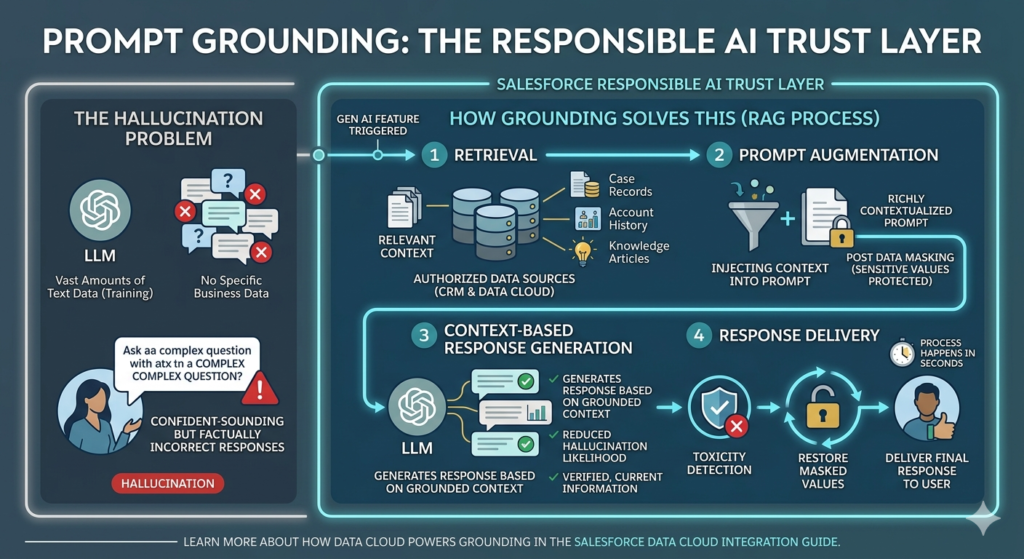

Prompt grounding is one of the most technically significant components of the Salesforce Responsible AI Trust Layer because it directly addresses one of the most cited concerns about generative AI: hallucination.

The Hallucination Problem

LLMs are trained on vast amounts of text data, which gives them impressive language capabilities. However, they do not have access to your specific business data, and they can generate confident-sounding but factually incorrect responses when asked questions that require current, specific, or proprietary knowledge. This is known as hallucination, and it is a serious problem in enterprise contexts where accuracy is critical.

How Grounding Solves This

The Trust Layer solves the hallucination problem through retrieval-augmented generation (RAG). Here is how the process works step by step:

Step 1: Retrieval

When a user triggers a generative AI feature, the Trust Layer queries authorized data sources to retrieve relevant context. For a service case summary, this might include the case record, account history, previous interactions, and related knowledge articles. For a sales email, it might include opportunity details, contact information, and relevant product data. This retrieval can draw from both Salesforce CRM data and Salesforce Data Cloud, which can aggregate data from external sources.

Step 2: Prompt Augmentation

The retrieved context is injected into the prompt sent to the LLM. Instead of asking the model a generic question, the Trust Layer sends a richly contextualized prompt that tells the model exactly what it needs to know to generate an accurate, relevant response. Critically, data masking is applied to the augmented prompt before it leaves Salesforce, so sensitive values in the retrieved context are protected as well.

Step 3: Context-Based Response Generation

The LLM generates its response based on the grounded context rather than relying solely on its training data. This dramatically reduces the likelihood of hallucinated facts because the model is working with verified, current information from your CRM.

Step 4: Response Delivery

The Trust Layer processes the response through toxicity detection, restores any masked values in the appropriate context, and delivers the final response to the user. The entire process typically completes within seconds.

The result is AI-generated content that is not only more accurate and relevant but also more trustworthy. Learn more about how Data Cloud powers grounding by visiting our Salesforce Data Cloud integration guide.
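The four steps above can be sketched end to end in a few lines of Python. This is a toy model under stated assumptions, not the Trust Layer's implementation: `fake_llm` stands in for a real model call, the keyword check in `passes_safety` stands in for real toxicity detection, and masking is applied to the augmented prompt just before it is sent. Every function name here is hypothetical.

```python
def retrieve(case_id, store):
    """Step 1: fetch authorized context (here, a plain dict lookup)."""
    return store[case_id]


def augment(question, context):
    """Step 2: inject the retrieved context into the prompt."""
    return f"Context:\n{context}\n\nTask: {question}"


def mask(prompt, sensitive_values):
    """Replace each sensitive value with a numbered placeholder token."""
    mapping = {f"[VALUE_{i}]": v for i, v in enumerate(sensitive_values, start=1)}
    for token, value in mapping.items():
        prompt = prompt.replace(value, token)
    return prompt, mapping


def fake_llm(prompt):
    """Step 3 stand-in: echo the first context line as a 'summary'."""
    first_context_line = prompt.splitlines()[1]
    return f"Summary: {first_context_line}"


def passes_safety(response, blocked_terms=("idiot",)):
    """Step 4 stand-in: a keyword check in place of real toxicity scoring."""
    return not any(term in response.lower() for term in blocked_terms)


def unmask(text, mapping):
    """Restore original values in the model's response."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


def run_pipeline(case_id, question, store, sensitive_values):
    context = retrieve(case_id, store)
    prompt = augment(question, context)
    masked_prompt, mapping = mask(prompt, sensitive_values)
    response = fake_llm(masked_prompt)
    if not passes_safety(response):
        return "Response withheld by safety policy.", masked_prompt
    return unmask(response, mapping), masked_prompt


store = {"500A": "Jane Doe reported a billing error on invoice 1042."}
final, sent = run_pipeline("500A", "Summarize the case.", store, ["Jane Doe"])
```

In this run, `sent` (what the model would see) contains `[VALUE_1]` instead of the customer's name, while `final` (what the user sees) has the name restored.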

Dynamic Data Masking

Dynamic data masking is one of the most visible and important protections within the Salesforce Responsible AI Trust Layer. It ensures that even when your CRM data is used to ground AI prompts, the sensitive elements within that data are never exposed to external model providers.

What Gets Masked?

The Trust Layer applies masking to a broad range of sensitive data categories:

Personally Identifiable Information (PII)

- Full names

- Email addresses

- Phone numbers

- Home addresses

- Social Security numbers

- Date of birth

- Government-issued ID numbers

Financial Information

- Account numbers

- Credit card information

- Revenue figures

- Deal values (in certain configurations)

- Banking details

Health-Related Information

- Medical record numbers

- Diagnosis codes

- Treatment information

- Insurance details

Business-Sensitive Information

- Contract terms and pricing

- Proprietary formulas or processes

- Strategic plans

- Merger and acquisition details

How Masking Works Technically

When the Trust Layer identifies a sensitive field, it replaces the actual value with a structured token, for example replacing “John Smith” with “[PERSON_1]” and “john.smith@example.com” with “[EMAIL_1].” These tokens are consistent within a single prompt: every occurrence of the same value maps to the same token, so the LLM can still tell from context that [PERSON_1] and [EMAIL_1] belong to the same individual without knowing their actual identity.

The LLM processes the masked prompt and generates a response using the tokens as placeholders. When the response returns to the Trust Layer, it re-maps the tokens back to their actual values before delivering the response to the user. The user sees a complete, personalized response, but the external model never processed the actual sensitive data.

This tokenization approach preserves the semantic and relational meaning of the data for AI processing while maintaining strict privacy protections — a sophisticated solution to what could otherwise be an intractable privacy problem.
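The tokenization round trip described above can be illustrated with a small Python sketch. It assumes a simple regular expression for email addresses and a caller-supplied list of known names; real PII detection is considerably more sophisticated, and the `mask` and `unmask` helpers are hypothetical names, not Salesforce functions.

```python
import re

# Simplified email pattern, for illustration only.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def mask(text, known_names):
    """Replace emails and known names with numbered tokens.

    Returns the masked text and a token -> original value mapping.
    """
    mapping = {}  # token -> original value
    reverse = {}  # original value -> token, keeps tokens consistent

    def token_for(value, kind):
        if value not in reverse:
            count = sum(1 for t in mapping if t.startswith(f"[{kind}_")) + 1
            token = f"[{kind}_{count}]"
            mapping[token] = value
            reverse[value] = token
        return reverse[value]

    text = EMAIL_RE.sub(lambda m: token_for(m.group(0), "EMAIL"), text)
    for name in known_names:
        if name in text:
            text = text.replace(name, token_for(name, "PERSON"))
    return text, mapping


def unmask(text, mapping):
    """Restore the original values in a model response."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


prompt = (
    "Draft a reply to John Smith (john.smith@example.com). "
    "John Smith asked about billing."
)
masked, mapping = mask(prompt, ["John Smith"])
reply = unmask("Hi [PERSON_1], we emailed [EMAIL_1].", mapping)
```

Because the same value always maps to the same token, both mentions of the customer become `[PERSON_1]`, and the mapping never leaves the trusted side, so only the caller can reverse it.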

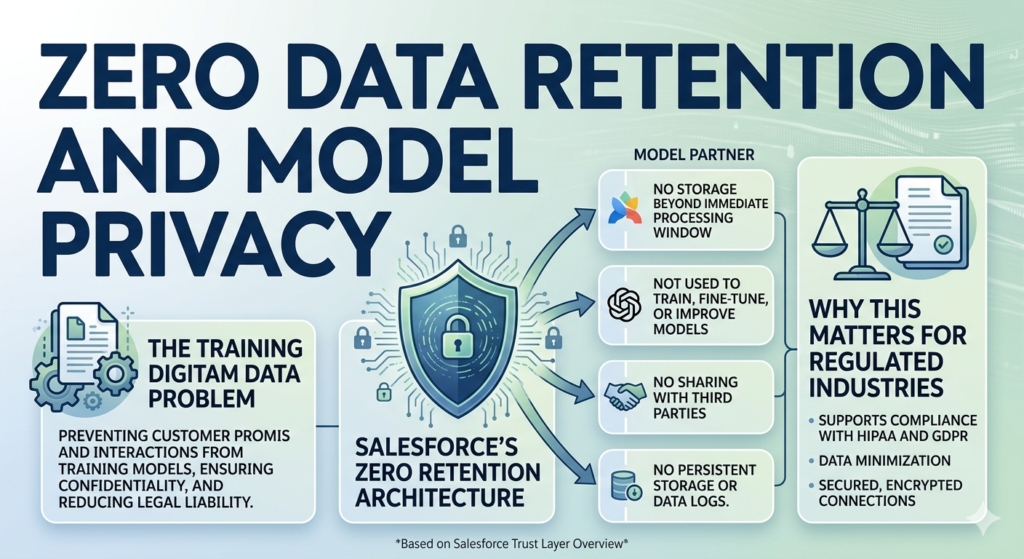

Zero Data Retention and Model Privacy

The Salesforce Trust Layer includes a critical protection that goes beyond what most organizations think to ask about: what happens to their data after it has been processed by an AI model?

The Training Data Problem

Many AI service providers, by default, reserve the right to use customer prompts and interactions to improve their models. For a consumer application, this might be acceptable. For an enterprise handling sensitive customer data, proprietary information, or regulated data, it is potentially catastrophic. Data submitted to train a model could theoretically surface in responses to other users, violating confidentiality and potentially creating legal liability.

Salesforce’s Zero Retention Architecture

The Salesforce Trust Layer addresses this problem through contractual and technical zero data retention protections. Salesforce has established agreements with its AI model partners — including major providers available through the Einstein AI model gateway — that prohibit these providers from:

- Storing prompts or responses beyond the immediate processing window

- Using Salesforce customer data to train, fine-tune, or improve their models

- Sharing data with third parties

- Retaining data logs that could be accessed by unauthorized parties

On the technical side, the Trust Layer enforces these protections by transmitting data through secured, encrypted connections with no persistent storage at the model provider level. The entire transaction — prompt in, response out — is designed to leave no trace on external infrastructure.

Why This Matters for Regulated Industries

For organizations in healthcare, financial services, legal, and government sectors, zero data retention is not just a preference — it is often a legal requirement. HIPAA requires that business associates handle protected health information with strict controls on storage and processing. GDPR requires data minimization and limits on international data transfers. The Trust Layer’s zero retention architecture supports compliance with these and other regulations by ensuring that sensitive data does not persist beyond its intended use.

Salesforce’s Trust Layer overview provides additional technical detail on how these protections are implemented.

Toxicity Detection and Output Controls

Generating content with AI is fast and efficient, but it introduces a new category of risk: the possibility that AI outputs contain harmful, biased, or inappropriate content. The Trust Layer addresses this risk through a comprehensive toxicity detection and output control system.

What Toxicity Detection Covers

Harmful Content Filtering

The Trust Layer scans AI responses for content that could cause harm if delivered to users or customers. This includes violent or threatening language, instructions for harmful activities, content that demeans or dehumanizes individuals or groups, and other forms of harmful material.

Bias Detection

AI models can inadvertently reflect biases present in their training data. The Trust Layer includes bias detection mechanisms that identify responses that may contain discriminatory assumptions, stereotypes, or unfair characterizations related to protected characteristics including race, gender, religion, disability, and other categories.

Safety Scoring

Each AI response receives a safety score based on multiple factors, including toxicity level, potential for harm, alignment with defined policies, and confidence level. This score determines whether the response is delivered as-is, flagged for review, modified, or blocked entirely.

Response Blocking and Revision

When a response fails to meet safety thresholds, the Trust Layer has several options depending on the severity of the issue and the policies your organization has configured:

- Block: The response is not delivered to the user, and an appropriate message is displayed instead

- Revise: The response is automatically modified to remove or neutralize the problematic content

- Flag: The response is delivered but marked for human review in the audit log

- Escalate: The interaction is routed to a human supervisor for review before any response is delivered
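One way to picture how a safety score could map to these outcomes is a simple threshold table. The Python sketch below is an assumption-laden illustration: the 0.0 to 1.0 scale, the threshold values, and the severity ordering (flag, then revise, then escalate, then block) are all hypothetical and are not documented Salesforce behavior.

```python
from enum import Enum


class Action(Enum):
    DELIVER = "deliver"
    FLAG = "flag"
    REVISE = "revise"
    ESCALATE = "escalate"
    BLOCK = "block"


# Hypothetical thresholds on a 0.0 (safe) to 1.0 (most toxic) scale.
DEFAULT_THRESHOLDS = [
    (0.9, Action.BLOCK),
    (0.7, Action.ESCALATE),
    (0.5, Action.REVISE),
    (0.3, Action.FLAG),
]


def choose_action(toxicity_score, thresholds=DEFAULT_THRESHOLDS):
    """Return the most severe action whose threshold the score meets."""
    if not 0.0 <= toxicity_score <= 1.0:
        raise ValueError("toxicity_score must be between 0.0 and 1.0")
    for minimum, action in sorted(thresholds, key=lambda t: t[0], reverse=True):
        if toxicity_score >= minimum:
            return action
    return Action.DELIVER
```

A stricter deployment could pass its own table, for example `choose_action(0.6, [(0.5, Action.BLOCK), (0.2, Action.FLAG)])`, which blocks a response that the default table would only revise.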

Configuring Toxicity Controls

Organizations can configure their toxicity detection settings to match their specific context. A children’s education platform may apply much stricter content controls than a B2B financial services application. The Trust Layer’s policy engine allows administrators to tune these settings for their specific use case while maintaining baseline protections that cannot be disabled.

Audit Trail and Compliance Monitoring

For enterprises operating in regulated environments, the ability to demonstrate accountability over AI usage is not optional; it is a compliance requirement. The Salesforce Responsible AI Trust Layer provides comprehensive audit trail capabilities that give organizations complete visibility into every AI interaction.

What Gets Logged

The Trust Layer maintains detailed logs for each AI interaction, capturing:

Interaction Details

- Timestamp of the interaction

- User identity and role

- AI feature or agent involved

- The original user prompt (pre-grounding)

Processing Details

- Data sources accessed for grounding

- Fields that were masked and the masking tokens used

- The augmented prompt sent to the LLM (with masking applied)

- The LLM provider used

- Processing time and model response metadata

Output Details

- The raw response received from the LLM

- Toxicity detection results and scores

- Any modifications made to the response

- The final response delivered to the user

Policy Details

- Which governance policies were applied

- Any policy violations detected

- Actions taken in response to violations
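The logged fields listed above could be modeled as a single structured record. The Python sketch below shows one possible shape; the `AuditRecord` class and its field names are hypothetical and do not reflect the actual schema Salesforce uses to store audit data.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    user_id: str
    feature: str
    original_prompt: str
    masked_prompt: str
    data_sources: list
    llm_provider: str
    raw_response: str
    final_response: str
    toxicity_score: float
    policy_actions: list = field(default_factory=list)
    # UTC timestamp captured when the record is created.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self):
        """Serialize the record for storage or export."""
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    user_id="005X",
    feature="case_summary",
    original_prompt="Summarize this case.",
    masked_prompt="Context: [PERSON_1] reported an error. Task: Summarize this case.",
    data_sources=["Case:500A"],
    llm_provider="example-provider",
    raw_response="[PERSON_1] reported an error.",
    final_response="Jane Doe reported an error.",
    toxicity_score=0.02,
)
```

Keeping both the masked prompt and the final response in one record is what lets a reviewer reconstruct exactly what the model saw versus what the user saw.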

Using Audit Trails for Compliance

These comprehensive logs support multiple compliance use cases:

Regulatory Audits: When a regulator requests evidence of how AI decisions were made or how data was processed, audit logs provide a complete, timestamped record of every interaction.

Internal Governance Reviews: Compliance teams can regularly review AI interaction logs to identify patterns, detect potential misuse, and assess whether governance policies are working as intended.

Incident Investigation: If an AI interaction produces an unexpected or problematic outcome, audit logs allow administrators to trace exactly what data was used, what prompt was sent, and what response was received — enabling rapid root cause analysis.

User Activity Monitoring: Administrators can track AI usage patterns by user, team, or feature, identifying training needs or potential misuse scenarios.

Data Lineage Documentation: Audit logs create a clear record of which data sources contributed to each AI interaction, supporting data lineage requirements under GDPR and other regulations.

Salesforce AI Ethics Principles

The Salesforce Responsible AI Trust Layer is the technical implementation of Salesforce’s broader AI ethics framework. Understanding these principles helps explain why the Trust Layer is designed the way it is and what values it is designed to protect.

Salesforce has articulated six core principles that guide the development and deployment of AI across its platform:

1. Accuracy

AI systems should produce responses and recommendations that are factually correct and contextually appropriate. The Trust Layer’s grounding mechanism directly supports this principle by anchoring AI responses to verified CRM data rather than relying solely on an LLM’s potentially outdated or inaccurate training knowledge.

2. Safety

AI interactions should not cause harm to individuals, organizations, or society. The Trust Layer’s toxicity detection, data masking, and output controls are all direct expressions of this principle, creating multiple layers of protection against harmful outcomes.

3. Honesty

AI systems should be transparent about their limitations and should not present generated content as more certain or authoritative than it actually is. The Trust Layer supports honesty by providing clear attribution for AI-generated content and by flagging outputs where confidence is low.

4. Transparency

Users and organizations should be able to understand how AI systems work and how decisions are made. The Trust Layer’s audit trail capabilities, grounding mechanisms, and explainability features support transparency by making AI interactions traceable and comprehensible.

5. Empowerment

AI should augment human capabilities rather than replace human judgment in critical decisions. The Trust Layer’s design consistently positions AI as a tool that generates suggestions for human review rather than autonomous decisions — users remain in control of the final output.

6. Sustainability

AI development and deployment should consider long-term environmental and social impacts. Salesforce’s approach to responsible AI includes commitments to energy-efficient model deployment and ongoing monitoring of societal impacts.

These principles, which form the core of Salesforce’s AI ethics, are not abstract ideals. They are operationalized through the Trust Layer’s specific technical capabilities, creating a direct line from organizational values to system behavior.

Real-World Use Cases

The Salesforce Responsible AI Trust Layer is not a theoretical construct. It is actively enabling secure, responsible AI across a wide range of enterprise use cases. Here are some of the most compelling examples.

Secure Service Case Summarization

Customer service agents handling dozens of cases per day can use Einstein’s case summarization feature to instantly generate a concise summary of a service case’s history, customer sentiment, and key details. The Trust Layer grounds this summary in the actual case record and communication history, masks any sensitive customer information before it reaches the LLM, and ensures that the resulting summary is accurate and appropriately protective of customer privacy.

Sales Email Generation

Sales representatives can use AI to draft personalized outreach emails based on opportunity data, account history, and contact information. The Trust Layer ensures that financial details, contract terms, and competitive intelligence are masked before being processed, while still providing enough context to generate a genuinely relevant, personalized email.

Knowledge Article Drafting

Support teams can accelerate knowledge management by using AI to draft new knowledge articles based on resolved cases. The Trust Layer grounds these drafts in verified resolution data and masks any customer-identifying information, ensuring that published knowledge articles do not inadvertently expose private customer details.

Contract Analysis

Legal and procurement teams can use AI to analyze contract documents, identifying key terms, obligations, and risk factors. The Trust Layer’s masking capabilities are particularly important here, protecting commercially sensitive terms, pricing details, and counterparty information while still enabling useful AI analysis.

Customer Support Automation

AI-powered chatbots and service agents built on Agentforce can handle customer inquiries using the Trust Layer to ground responses in the customer’s actual account and service history. This enables personalized, accurate support while ensuring that sensitive account details are handled according to strict privacy controls. Learn more about how Agentforce leverages the Trust Layer in our Agentforce implementation guide.

Trust Layer vs. Standard LLM Integrations

One of the most useful ways to understand the value of the Salesforce Responsible AI Trust Layer is to compare it directly to what a standard, unprotected LLM integration looks like. The table below illustrates the key differences:

| Capability | Salesforce Trust Layer | Standard LLM Integration |
|---|---|---|
| Dynamic Data Masking | ✅ Automatic, field-level masking of PII, financial, and health data before LLM processing | ❌ Raw data sent to LLM without masking unless manually implemented |
| Zero Data Retention | ✅ Contractual and technical protections prevent data storage and model training by providers | ❌ Most providers retain data by default; opt-out may be available but is not guaranteed |
| Prompt Grounding | ✅ Automated RAG with CRM and Data Cloud context, permission-aware retrieval | ⚠️ Possible through custom development but requires significant engineering effort and lacks access control integration |
| Auditability | ✅ Complete interaction logs including prompts, responses, data sources, masking actions, and policy applications | ❌ Minimal logging; typically limited to API call metadata |
| Toxicity Detection | ✅ Built-in output scanning with configurable thresholds, automatic blocking or revision | ⚠️ Available through some providers but not standardized or enterprise-configurable |
| Governance and Policy Engine | ✅ Configurable organizational policies for data access, masking rules, content standards, and escalation | ❌ No enterprise governance layer; must be built custom |
| Regulatory Compliance Support | ✅ Supports GDPR, CCPA, HIPAA, and other frameworks through technical controls and audit evidence | ❌ Compliance burden falls entirely on the implementing organization |
| Permission Integration | ✅ Respects Salesforce user roles, profiles, and sharing rules in data retrieval | ❌ No native integration with CRM permission models |
| Enterprise Readiness | ✅ Purpose-built for enterprise deployment with SLAs, support, and compliance documentation | ⚠️ Requires significant custom development to reach comparable enterprise readiness |

This comparison makes clear that building equivalent protections outside of the Trust Layer would require substantial engineering investment, ongoing maintenance, and significant compliance expertise. The Trust Layer delivers these capabilities as an integrated platform feature.

Benefits of the Salesforce Responsible AI Trust Layer

Organizations that adopt the Salesforce Responsible AI Trust Layer realize benefits across multiple dimensions:

Stronger Data Protection

The combination of dynamic masking, zero retention, and secure grounding creates multiple overlapping layers of data protection. Even if one protection were somehow circumvented, others would remain in place. This defense-in-depth approach provides far stronger protection than any single security measure.

Reduced AI Risk

By filtering harmful outputs, detecting bias, enforcing governance policies, and grounding responses in verified data, the Trust Layer dramatically reduces the most significant risks associated with enterprise AI deployment — from data leakage to reputational damage from harmful AI outputs.

Better Compliance Posture

The Trust Layer’s audit trail, data minimization controls, consent enforcement, and regulatory alignment features give compliance teams the evidence and controls they need to confidently support AI adoption. This reduces the compliance burden on IT and legal teams and accelerates regulatory review processes.

Increased User Trust

When employees and customers know that AI interactions are governed by clear ethical principles and technical controls, they are more willing to engage with AI-powered features. This increased trust translates directly into higher adoption rates and greater value realization from AI investments.

Faster AI Adoption

Paradoxically, stronger governance accelerates adoption. When trust and compliance concerns are addressed at the platform level, business teams can move forward with AI implementation without waiting for lengthy security reviews or custom compliance engineering. The Trust Layer removes the governance bottleneck that often delays enterprise AI projects.

Best Practices for Implementing Responsible AI

Successfully implementing the Salesforce Responsible AI Trust Layer requires more than simply enabling platform features. Here are the best practices that leading organizations follow:

1. Classify Your Sensitive Data First

Before configuring masking rules, conduct a comprehensive data classification exercise. Identify all sensitive fields in your Salesforce org — not just the obvious ones like Social Security numbers, but also business-sensitive fields like deal sizes, contract terms, and proprietary process details. Effective masking depends on knowing what needs to be masked.
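
A classification exercise can start as a simple field inventory that assigns each field a sensitivity tier. The sketch below is illustrative only: the field names, tier labels, and keyword rules are assumptions made for this example, not part of any Salesforce API, and a real inventory would be reviewed by data owners rather than inferred from field names alone.

```python
from enum import Enum


class Tier(Enum):
    HIGH = "high"          # always mask (for example, government IDs)
    MODERATE = "moderate"  # mask depending on context
    LOW = "low"            # no masking required


# Hypothetical keyword rules, checked in order so HIGH wins over MODERATE.
RULES = [
    (Tier.HIGH, ("ssn", "social_security", "passport", "tax_id")),
    (Tier.MODERATE, ("amount", "contract", "name", "email", "phone")),
]


def classify_field(api_name: str) -> Tier:
    """Return the first tier whose keywords appear in the field name."""
    lowered = api_name.lower()
    for tier, keywords in RULES:
        if any(keyword in lowered for keyword in keywords):
            return tier
    return Tier.LOW


def build_inventory(fields: list[str]) -> dict[str, Tier]:
    """Map every field name to its sensitivity tier."""
    return {field: classify_field(field) for field in fields}
```

Calling `build_inventory(["Contact.SSN__c", "Opportunity.Amount", "Account.Industry"])` yields one tier per field, which can then serve as the input for deciding which fields need masking rules.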

2. Define Governance Policies Based on Use Cases

Different AI use cases carry different risk profiles. A customer-facing chatbot requires different governance policies than an internal sales coaching tool. Define policies that are appropriately calibrated to the sensitivity and risk level of each use case rather than applying a one-size-fits-all approach.

3. Monitor AI Outputs Continuously

Implement ongoing monitoring of AI interaction logs to detect patterns that may indicate policy gaps, model drift, or emerging risks. Set up automated alerts for high-toxicity scores, unusual access patterns, or frequent policy violations. Responsible AI is not a one-time configuration — it requires continuous oversight.
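
As a rough illustration of automated alerting, the sketch below scans a batch of interaction records for high toxicity scores and repeated policy violations. The `Interaction` record, its fields, and the default thresholds are hypothetical; they stand in for whatever your audit log export actually contains.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interaction:
    user: str
    toxicity_score: float   # assumed to range from 0.0 to 1.0
    policy_violation: bool


def find_alerts(log, toxicity_threshold=0.8, max_violations_per_user=3):
    """Return human-readable alerts for a batch of logged interactions."""
    alerts = []
    # Alert on every single response that crosses the toxicity threshold.
    for entry in log:
        if entry.toxicity_score >= toxicity_threshold:
            alerts.append(
                f"high toxicity ({entry.toxicity_score:.2f}) for {entry.user}"
            )
    # Alert on users who accumulate too many policy violations.
    violations = Counter(e.user for e in log if e.policy_violation)
    for user, count in violations.items():
        if count >= max_violations_per_user:
            alerts.append(f"{count} policy violations by {user}")
    return alerts
```

Running a check like this on a schedule, and tuning the thresholds per use case, is one way to turn log review into continuous oversight rather than an occasional manual task.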

4. Train Users on Responsible AI Usage

Technology controls are important, but human behavior matters equally. Train AI users on responsible AI practices, including how to craft effective prompts, how to review and verify AI-generated content, and how to report concerns about AI outputs. A well-trained user is an additional layer of governance.

5. Review Compliance Requirements Regularly

AI regulations are evolving rapidly. GDPR enforcement priorities are changing, new AI-specific regulations are emerging in the EU, UK, and US, and industry-specific requirements continue to evolve. Build regular compliance reviews into your AI governance program to ensure that your Trust Layer configuration stays current with regulatory requirements.

6. Conduct Regular Governance Audits

Periodically review your AI governance framework in its entirety: not just individual policies, but also the overall architecture, the data sources used for grounding, the LLMs being deployed, and the escalation processes for policy violations. Use these audits to continuously improve your responsible AI posture.

Common Challenges and Solutions

Implementing the Salesforce Responsible AI Trust Layer effectively is not without challenges. Here are the most common obstacles organizations encounter and practical solutions for addressing them.

Challenge 1: Over-Masking Useful Context

The Problem: Setting masking rules too aggressively can strip so much context from prompts that the resulting AI responses are too generic to be useful. If every customer reference is masked, an AI-generated email may lose the personalization that makes it valuable.

The Solution: Implement tiered masking strategies that distinguish between different sensitivity levels. High-sensitivity fields like government ID numbers should always be masked, while moderately sensitive fields like names might be masked in some contexts but retained in others. Use role-based masking rules that adjust based on the user’s authorization level and the sensitivity of the interaction.
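
The tiered approach can be sketched as follows. This is a simplified illustration, not how the Trust Layer implements masking: the role names, placeholder tokens, and the US-style ID pattern are assumptions chosen for the example.

```python
import re

# High-sensitivity pattern (US Social Security number format).
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical roles that may see customer names in prompts.
NAME_VISIBLE_ROLES = {"service_agent", "account_manager"}


def mask_prompt(prompt: str, customer_name: str, role: str) -> str:
    """Apply tiered masking: IDs always, names only for some roles."""
    # Tier 1: government IDs are masked regardless of who is asking.
    masked = SSN_PATTERN.sub("[GOV_ID]", prompt)
    # Tier 2: names are kept only for roles on the allow list.
    if role not in NAME_VISIBLE_ROLES:
        masked = masked.replace(customer_name, "[NAME]")
    return masked
```

With this rule set, a service agent's prompt keeps the customer name so the generated email stays personalized, while a role outside the allow list sees `[NAME]` instead. The government ID is replaced in both cases.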

Challenge 2: Governance Complexity

The Problem: Large organizations with diverse AI use cases can quickly find themselves managing dozens of governance policies, each with different masking rules, toxicity thresholds, and data source configurations. This complexity can become difficult to manage and audit.

The Solution: Develop a governance framework with a clear taxonomy of AI use case categories, each with standardized policy templates. This reduces the number of unique policy configurations while still allowing appropriate customization. Designate a dedicated AI governance owner responsible for maintaining policy consistency.
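
One way to picture standardized templates is a small set of immutable policy records keyed by use case category, with overrides applied only where a specific deployment needs them. The category names, fields, and threshold values below are hypothetical and purely illustrative.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PolicyTemplate:
    category: str
    toxicity_threshold: float  # block outputs scoring at or above this value
    mask_names: bool
    human_review: bool


# Hypothetical taxonomy: one standard template per use case category.
TEMPLATES = {
    "customer_facing": PolicyTemplate("customer_facing", 0.3, True, True),
    "internal_assist": PolicyTemplate("internal_assist", 0.6, False, False),
}


def policy_for(category: str, **overrides) -> PolicyTemplate:
    """Start from the category's template and apply explicit overrides."""
    return replace(TEMPLATES[category], **overrides)
```

Because the templates are frozen, `policy_for("internal_assist", human_review=True)` returns a new policy and leaves the shared template untouched, which keeps every deviation from the standard explicit and easy to audit.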

Challenge 3: Model Selection and Governance Alignment

The Problem: Different LLMs have different capabilities, behaviors, and risk profiles. A model well-suited for creative writing may not be appropriate for compliance-sensitive legal analysis. Organizations may struggle to align model selection with governance requirements.

The Solution: Develop a model selection framework that matches specific LLMs to specific use case categories based on their demonstrated performance, safety characteristics, and compliance certifications. Leverage the Einstein AI model gateway, which allows you to select from pre-approved models with established Trust Layer integrations, rather than integrating models independently.
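
A model selection framework can be as simple as a registry that records which use cases and certifications each approved model covers. In the sketch below, the model names, use case labels, and certification labels are placeholders invented for illustration; they do not refer to real models or to any Salesforce configuration format.

```python
# Hypothetical registry of pre-approved models.
APPROVED_MODELS = {
    "model-a": {
        "use_cases": {"creative_writing", "email_drafting"},
        "certifications": set(),
    },
    "model-b": {
        "use_cases": {"legal_analysis", "email_drafting"},
        "certifications": {"soc2"},
    },
}


def eligible_models(use_case, required_certifications=frozenset()):
    """Return approved models that cover the use case and certifications."""
    return sorted(
        name
        for name, profile in APPROVED_MODELS.items()
        if use_case in profile["use_cases"]
        and set(required_certifications) <= profile["certifications"]
    )
```

A compliance-sensitive use case can then request `eligible_models("legal_analysis", {"soc2"})`, and any model missing the required certification is excluded before it is ever wired into a prompt template.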

Challenge 4: Balancing Security and Usability

The Problem: Every governance control adds friction to the AI user experience. Aggressive masking, frequent human review requirements, and strict output blocking can make AI features feel slow and unhelpful, discouraging adoption.

The Solution: Design governance policies with user experience explicitly in mind. Apply the most stringent controls only where the risk genuinely warrants them. Invest in governance controls that operate transparently in the background rather than requiring frequent user interaction. Measure user satisfaction alongside security metrics to identify friction points and optimize accordingly.

Why Work with a Salesforce AI Partner

While the Salesforce Responsible AI Trust Layer provides powerful capabilities out of the box, realizing its full value requires expertise in both Salesforce platform configuration and enterprise AI governance. This is where working with an experienced Salesforce AI implementation partner makes a significant difference.

What a Salesforce AI Partner Brings

Trust Layer Configuration Expertise

Configuring masking rules, toxicity thresholds, and policy engines for complex enterprise environments requires deep platform knowledge. A specialized partner has implemented these configurations across multiple organizations and industries, bringing proven patterns and best practices that accelerate deployment and reduce configuration errors.

Secure Prompt Engineering

Effective generative AI depends on well-designed prompts. A Salesforce AI partner can design and test prompt templates that leverage the Trust Layer’s grounding capabilities effectively while avoiding patterns that produce poor or potentially harmful outputs.

Data Cloud Grounding Integration

Maximizing the accuracy of AI responses requires connecting Data Cloud’s unified customer data to the Trust Layer’s grounding mechanism. This integration involves data modeling, identity resolution, and permission configuration that benefits from specialized expertise.

Governance Framework Design

Building an enterprise AI governance framework goes beyond platform configuration. It requires cross-functional collaboration with legal, compliance, security, and business teams. An experienced partner can facilitate this process, drawing on frameworks they have developed across multiple client engagements.

Ongoing Optimization and Monitoring

Responsible AI is not a one-time deployment — it requires ongoing monitoring, tuning, and governance. A long-term implementation partner can provide managed services that continuously optimize your Trust Layer configuration, monitor AI performance and safety, and keep your governance framework current with evolving regulations.

Change Management and Training

Successful AI adoption requires people as much as technology. Implementation partners can design and deliver training programs, develop internal AI champions, and support the change management processes that drive sustained adoption.

If you are planning to implement or expand AI capabilities within Salesforce, partnering with a team that specializes in the Trust Layer and responsible AI governance is one of the most valuable investments you can make in the success of your AI program.

Conclusion: Enabling Confident AI Adoption with the Salesforce Trust Layer

The promise of generative AI in the enterprise is enormous. The ability to instantly summarize complex information, draft personalized communications, analyze documents, and automate routine interactions could transform productivity across every department and function. But realizing this promise requires more than deploying AI — it requires deploying AI responsibly.

The Salesforce Responsible AI Trust Layer is Salesforce’s answer to the central challenge of enterprise AI adoption: how do you unlock the power of large language models without exposing sensitive customer data, violating regulatory requirements, or producing harmful outputs? The Trust Layer addresses this challenge through a multi-layered architecture that encompasses secure data grounding, dynamic masking, zero retention protections, toxicity detection, comprehensive audit logging, and configurable policy enforcement.

Every component of the Trust Layer is an expression of Salesforce AI ethics in practice: the translation of principles like accuracy, safety, honesty, transparency, empowerment, and sustainability into concrete technical controls. This is what responsible AI looks like at scale: not a promise, but a system.

For organizations considering or expanding their AI investments, the Trust Layer that Salesforce has built provides a compelling foundation. It does not require you to choose between powerful AI capabilities and responsible governance. It delivers both simultaneously, through a platform-native architecture that protects data, enforces ethics, and maintains the complete audit record you need to operate with confidence in an increasingly regulated environment.

The result is AI adoption that moves faster, with lower risk, greater compliance confidence, and higher user trust than ad hoc LLM integrations typically allow. In a competitive landscape where AI capabilities are becoming a strategic differentiator, the organizations that get responsible AI right will be the ones that move furthest, fastest.

The Salesforce Responsible AI Trust Layer is how you get there.

About RizeX Labs

At RizeX Labs, we specialize in delivering cutting-edge Salesforce and AI solutions that help organizations adopt trusted, secure, and scalable artificial intelligence. Our expertise spans Agentforce, Einstein AI, Data Cloud, and the Salesforce Trust Layer, enabling businesses to innovate responsibly while protecting customer data.

We combine deep technical knowledge, industry best practices, and hands-on implementation experience to help companies deploy AI solutions that are transparent, compliant, and grounded in enterprise data.

We empower organizations to transform their AI strategy—from experimental use cases to production-ready, governed AI systems that drive efficiency, trust, and measurable business value.

Quick Summary

Salesforce Responsible AI is a framework that ensures artificial intelligence is secure, transparent, and aligned with ethical principles. At the center of this approach is the Salesforce Trust Layer, which provides data masking, grounding, toxicity detection, audit trails, and policy controls for every AI interaction.

By using the Trust Layer, organizations can safely deploy generative AI across customer service, sales, and marketing workflows without exposing sensitive CRM data. This architecture allows businesses to benefit from AI innovation while maintaining compliance, governance, and customer trust.

The Salesforce Responsible AI Trust Layer is a comprehensive, enterprise-grade security and governance architecture embedded within the Einstein 1 Platform that acts as a protective intermediary between Salesforce CRM data and the large language models (LLMs) powering generative AI features across the platform. At its core, the Trust Layer solves the most critical challenge of enterprise AI adoption: how to harness the power of generative AI without exposing sensitive customer data, violating regulatory requirements, or producing harmful outputs. It does so by combining six tightly integrated protective mechanisms: secure data retrieval and prompt grounding, dynamic data masking, zero data retention with external model providers, toxicity detection and output controls, comprehensive audit trail logging, and a configurable policy enforcement engine.

The grounding mechanism uses retrieval-augmented generation (RAG) to pull verified, permission-aware context from Salesforce CRM records and Data Cloud before augmenting prompts, dramatically reducing AI hallucinations and ensuring responses are accurate and relevant. Dynamic data masking automatically identifies and tokenizes sensitive fields (including PII, financial data, health information, and contract terms), replacing them with anonymous placeholders before any data reaches an LLM, then seamlessly restoring actual values in the final response delivered to the user.

Zero data retention protections, enforced through both contractual agreements and technical architecture, ensure that customer prompts and responses are never stored by external providers or used to train AI models, which is particularly critical for regulated industries governed by GDPR, HIPAA, and CCPA.

Toxicity detection scans every AI-generated response for harmful content, bias, and policy violations before delivery, with configurable thresholds that allow organizations to block, revise, flag, or escalate problematic outputs based on their specific governance requirements. The audit trail system captures every dimension of each AI interaction (including the original prompt, data sources accessed, masking actions applied, the LLM used, toxicity scores, and the final response), creating a complete, timestamped compliance record that supports regulatory audits and internal governance reviews.

Underpinning all of these technical controls are Salesforce's six core AI ethics principles (accuracy, safety, honesty, transparency, empowerment, and sustainability), which transform responsible AI from an aspirational framework into concrete, enforceable system behavior, ultimately enabling organizations across industries to adopt generative AI faster, with lower risk, stronger compliance posture, and greater confidence than any standard LLM integration could provide.